Specular Differentiation

The Python package specular implements specular differentiation, which generalizes classical differentiation.

This implementation strictly follows the definitions, notations, and results in [1] and [2].

A specular derivative (the red line) can be understood as the slope obtained by averaging the inclination angles of the right and left derivatives. In contrast, a symmetric derivative (the purple line) is the average of the right and left derivatives themselves. The following figure illustrates the difference.
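The contrast between the two averages can be sketched in a few lines of standard-library Python. This is a minimal illustration of the description above, not the package's implementation; the function names `specular_from_one_sided` and `symmetric_from_one_sided` are hypothetical and chosen here only for the example.

```python
import math


def specular_from_one_sided(right, left):
    """Slope of the line whose inclination angle is the mean of the two one-sided angles.

    Hypothetical helper for illustration; it is not part of the specular package.
    """
    return math.tan((math.atan(right) + math.atan(left)) / 2)


def symmetric_from_one_sided(right, left):
    """Plain arithmetic mean of the two one-sided derivatives."""
    return (right + left) / 2


# A kink with right derivative 2 and left derivative -1/2.
right, left = 2.0, -0.5
print("specular :", specular_from_one_sided(right, left))
print("symmetric:", symmetric_from_one_sided(right, left))

# Where both one-sided derivatives coincide, the two notions agree.
print("smooth   :", specular_from_one_sided(1.5, 1.5), symmetric_from_one_sided(1.5, 1.5))
```

The two values differ at the kink because averaging angles and averaging slopes are different operations; they coincide wherever the right and left derivatives are equal.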


Installation

Requirements

specular-differentiation requires:

- Python >= 3.11

- NumPy >= 2.0

Additional features are available through optional dependencies:

- ode: matplotlib, pandas, tqdm

- optimization: matplotlib, tqdm

- numba: numba

- jax: jax, jaxlib

User installation

Standard Installation (NumPy backend)

pip install specular-differentiation

This installs the core specular differentiation API, including A, derivative, directional_derivative, partial_derivative, gradient, and jacobian.

ODE solvers

pip install "specular-differentiation[ode]"

Optimization routines

pip install "specular-differentiation[optimization]"

Numba backend

pip install "specular-differentiation[numba]"

If Numba is installed and available, the package may use the Numba-accelerated CPU backend.

JAX backend

pip install "specular-differentiation[jax]"

See the backend documentation for JAX-specific usage.
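To see which extras are already usable in the current environment, the optional dependencies can be probed with the standard library alone. The sketch below is an assumption-laden convenience script, not something shipped with the package: the `EXTRAS` mapping simply restates the optional dependencies listed under Requirements, and `missing_packages` is a hypothetical helper name.

```python
from importlib.util import find_spec

# Extras and their packages, restated from the Requirements section.
# This mapping is written out by hand here; it is not read from the package.
EXTRAS = {
    "ode": ["matplotlib", "pandas", "tqdm"],
    "optimization": ["matplotlib", "tqdm"],
    "numba": ["numba"],
    "jax": ["jax", "jaxlib"],
}


def missing_packages(extra):
    """Return the packages of an extra that cannot be found in this environment."""
    return [name for name in EXTRAS[extra] if find_spec(name) is None]


for extra in EXTRAS:
    missing = missing_packages(extra)
    status = "ready" if not missing else "missing " + ", ".join(missing)
    print(f"[{extra}] {status}")
```

Any extra reported as missing can then be installed with the corresponding pip command above.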

Quick start

The following example computes the specular derivative of the ReLU function \(f(x) = \max(0, x)\) at the origin.

import specular

ReLU = lambda x: max(x, 0)

print(specular.derivative(ReLU, x=0))

0.41421356237309515
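The printed value can be checked by hand, assuming the angle-averaging description of the specular derivative given in the introduction. Writing \(f'_+(0)\) and \(f'_-(0)\) for the right and left derivatives of ReLU at the origin (notation introduced here only for this check):

\[
f'_+(0) = 1, \qquad f'_-(0) = 0, \qquad
\tan\!\left(\frac{\arctan 1 + \arctan 0}{2}\right)
= \tan\frac{\pi}{8}
= \sqrt{2} - 1
\approx 0.41421356 .
\]

This agrees with the output above up to floating-point rounding, whereas averaging the two one-sided derivatives directly would give 0.5.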

Backend support

The package is organized around a backend system. NumPy is the default backend, while accelerated backends are optional and may require extra dependencies.

| Backend | Calculation | ODE | Optimization |
|---|---|---|---|
| NumPy | supported | supported | supported |
| Numba | supported | supported (recommended) | not supported |
| JAX | supported | supported | supported (recommended) |
| TensorFlow | experimental | experimental | not supported |
| PyTorch | experimental | experimental | not supported |

Applications

Specular differentiation is defined in normed vector spaces, allowing for applications in higher-dimensional Euclidean spaces.

The specular package includes the following applications.

Ordinary differential equation

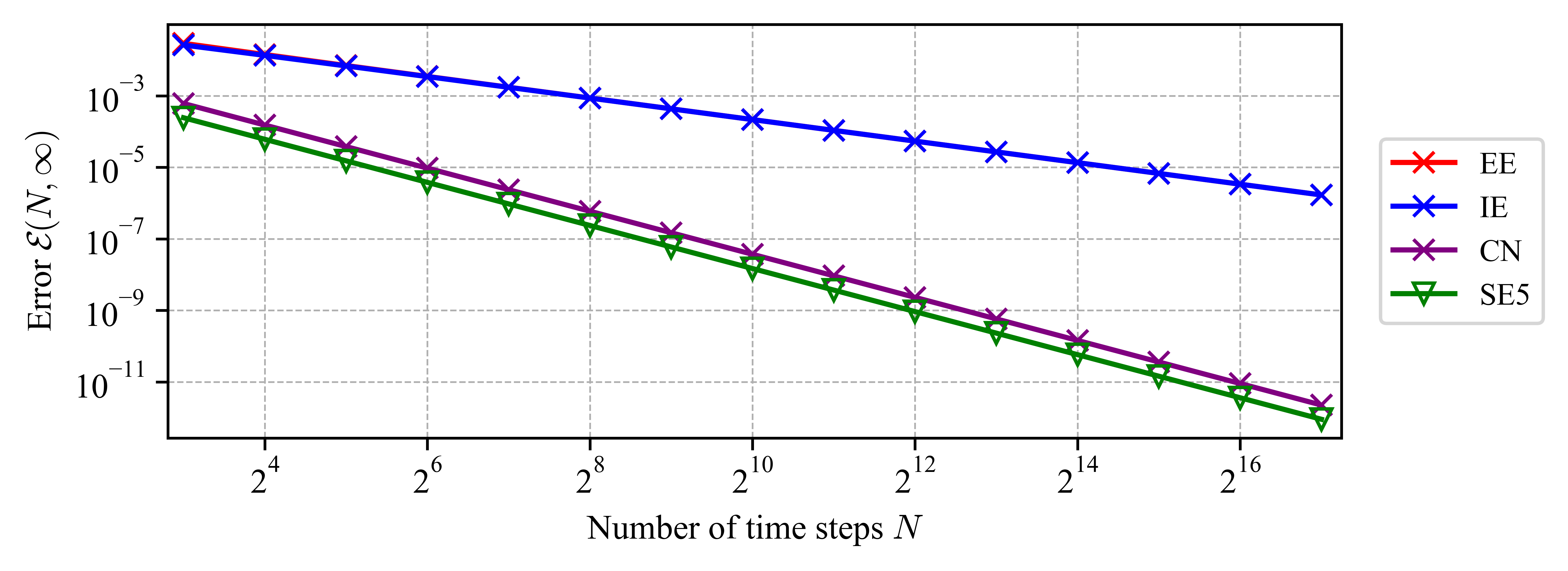

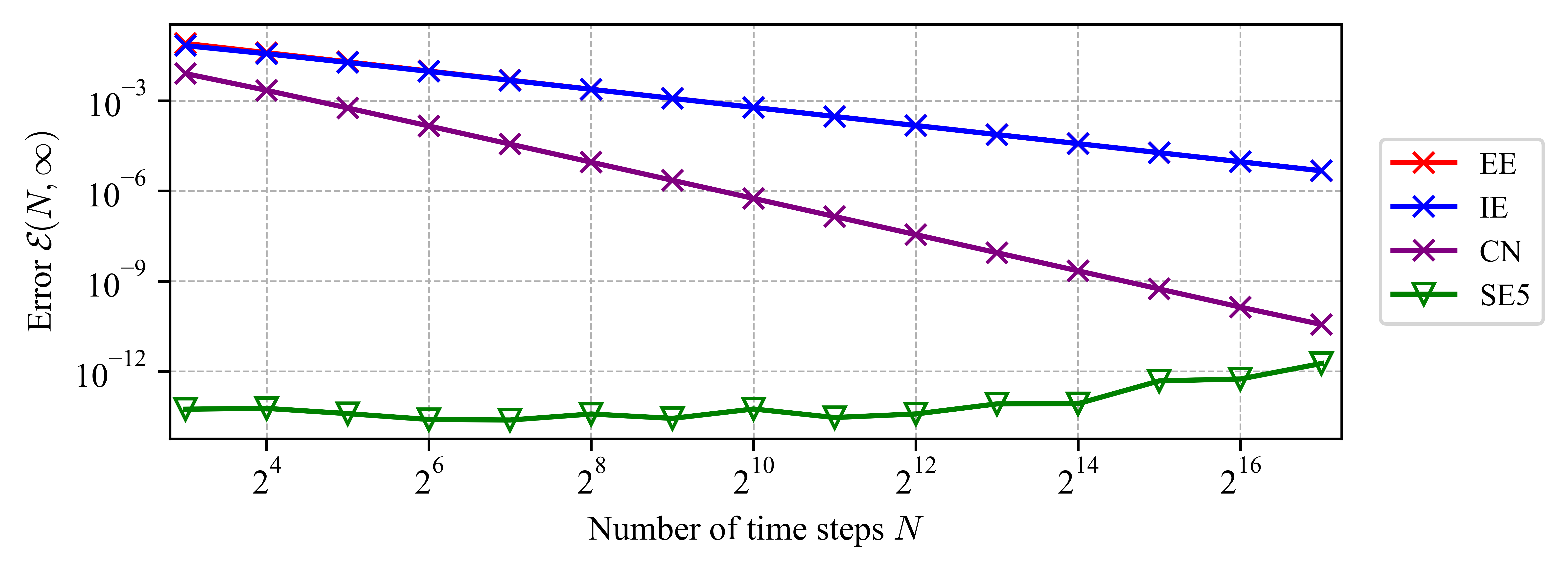

In [1], seven schemes are proposed for solving ODEs numerically:

- the specular Euler schemes of Types 1 to 6

- the specular trigonometric scheme

The following example shows that the specular Euler schemes of Types 5 and 6 yield more accurate numerical solutions than the classical schemes: the explicit Euler, implicit Euler, and Crank-Nicolson schemes.
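For orientation, the three classical baselines named above can be sketched on the linear test problem \(y' = \lambda y\), where each scheme has a closed-form update. This is a standalone illustration under that assumption and does not use the specular package or reproduce the experiments in [1]; the function name `classical_schemes` and the parameter values are chosen here for the example.

```python
import math


def classical_schemes(lam, y0, h, n_steps):
    """Integrate y' = lam * y with three classical one-step schemes.

    Returns the final values for explicit Euler, implicit Euler, and Crank-Nicolson.
    """
    explicit = implicit = crank = y0
    for _ in range(n_steps):
        explicit = explicit * (1 + h * lam)                        # y_{n+1} = y_n + h f(y_n)
        implicit = implicit / (1 - h * lam)                        # y_{n+1} = y_n + h f(y_{n+1})
        crank = crank * (1 + 0.5 * h * lam) / (1 - 0.5 * h * lam)  # trapezoidal average of both
    return explicit, implicit, crank


lam, y0, h, n_steps = -2.0, 1.0, 0.1, 10
exact = y0 * math.exp(lam * h * n_steps)
names = ("explicit Euler", "implicit Euler", "Crank-Nicolson")
for name, value in zip(names, classical_schemes(lam, y0, h, n_steps)):
    print(f"{name}: error = {abs(value - exact):.3e}")
```

On this problem the Crank-Nicolson error is the smallest of the three, which is the kind of baseline the specular schemes are compared against.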

Optimization

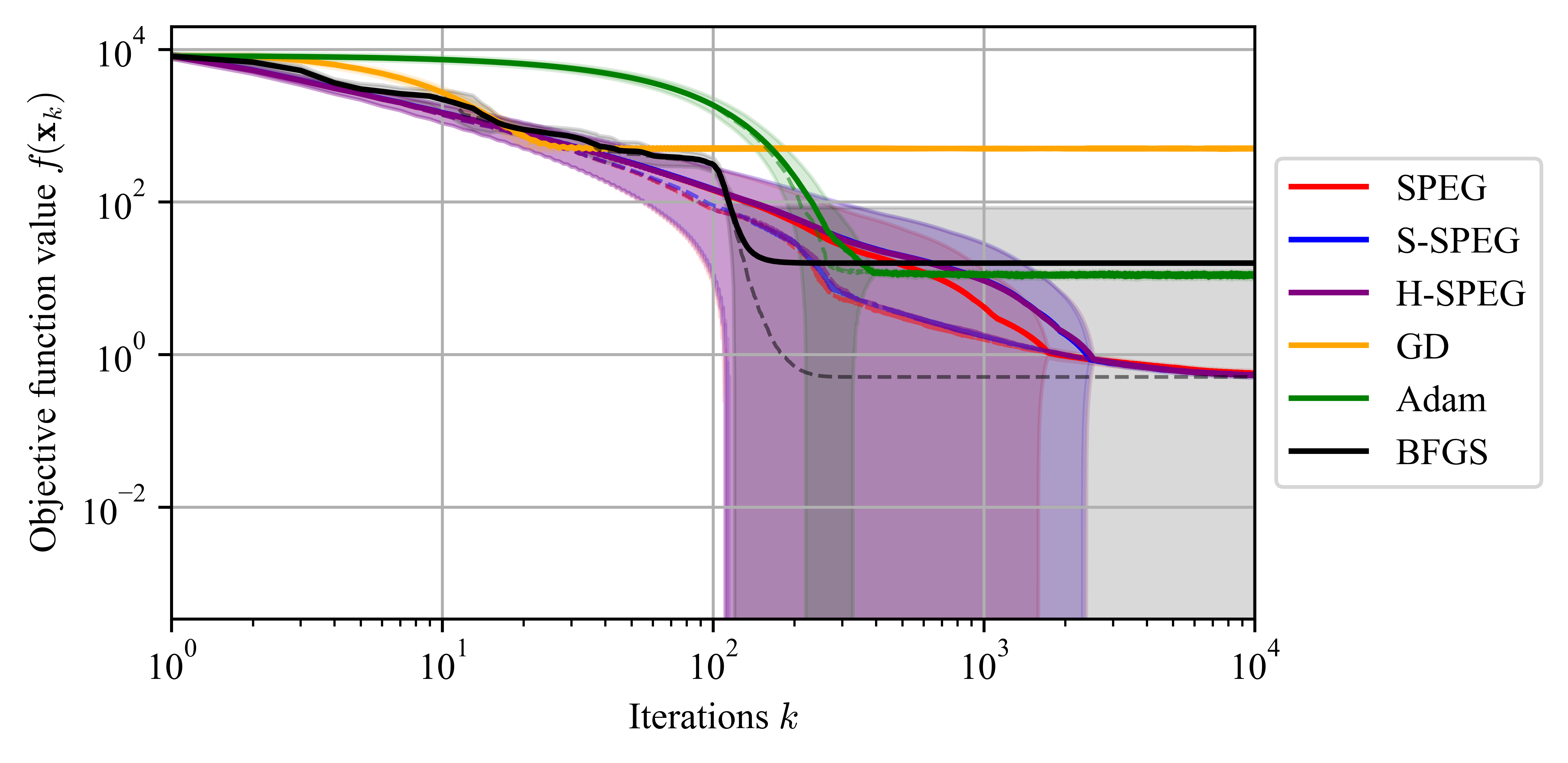

In [2], three methods are proposed for optimizing nonsmooth convex objective functions:

- the specular gradient (SPEG) method

- the stochastic specular gradient (S-SPEG) method

- the hybrid specular gradient (H-SPEG) method

The following example compares the three proposed methods with the classical methods: gradient descent (GD), Adaptive Moment Estimation (Adam), and Broyden-Fletcher-Goldfarb-Shanno (BFGS).

Documentation

Getting Started

API Reference

Examples

LaTeX macro

To use the specular differentiation symbol in your LaTeX document, add the following code to your preamble (before \begin{document}):

% Required packages

\usepackage{graphicx}

\usepackage{bm}

% Definition of Specular Differentiation symbol

\newcommand\sd[1][.5]{\mathbin{\vcenter{\hbox{\scalebox{#1}{\,$\bm{\wedge}$}}}}}

Usage examples

Use the symbol in your document (after \begin{document}):

% A specular derivative in the one-dimensional Euclidean space

$f^{\sd}(x)$

% A specular directional derivative in normed vector spaces

$\partial^{\sd}_v f(x)$
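Putting the preamble and usage pieces together, a minimal complete document might look like the following sketch. The document class and the sentence wording are arbitrary choices made for this example; only the packages and the macro come from the text above.

```latex
\documentclass{article}

% Required packages
\usepackage{graphicx}
\usepackage{bm}

% Definition of Specular Differentiation symbol
\newcommand\sd[1][.5]{\mathbin{\vcenter{\hbox{\scalebox{#1}{\,$\bm{\wedge}$}}}}}

\begin{document}

The specular derivative of $f$ at $x$ is written $f^{\sd}(x)$, and the
specular directional derivative in the direction $v$ is written
$\partial^{\sd}_v f(x)$.

\end{document}
```

The optional argument of the macro is the scaling factor passed to \scalebox, with a default of .5.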

Citing specular-differentiation

To cite this repository:

@software{Jung_specular-differentiation_2026,

author = {Jung, Kiyuob},

doi = {10.5281/zenodo.18246734},

license = {MIT},

month = jan,

title = {{specular-differentiation}},

url = {https://github.com/kyjung2357/specular-differentiation},

version = {1.1.0},

year = {2026},

}

References

[1] K. Jung. Nonlinear numerical schemes using specular differentiation for initial value problems of first-order ordinary differential equations. arXiv preprint arXiv:2601.09900, 2026.

[2] K. Jung. Specular differentiation in normed vector spaces and its applications to nonsmooth convex optimization. arXiv preprint arXiv:2601.10950, 2026.

[3] K. Jung and J. Oh. The specular derivative. arXiv preprint arXiv:2210.06062, 2022.

[4] K. Jung and J. Oh. The wave equation with specular derivatives. arXiv preprint arXiv:2210.06933, 2022.

[5] K. Jung and J. Oh. Nonsmooth convex optimization using the specular gradient method with root-linear convergence. arXiv preprint arXiv:2210.06933, 2024.